- Blog

- Dbschema pricing

- Navicat premium nulled

- Hill climb racing 2 mod apk 1-48-2

- Curse for mac

- One spirit martial arts

- Fortune teller origami

- Dont fear the reaper lyrics

- Games snow bros 2

- Jquery credit card validator example

- Daemon x machina baixar

- Opensong create set

- Istat menus helper

- Financial newsletters

- Dying light the following the spotlight edition

- Dammit doll pattern

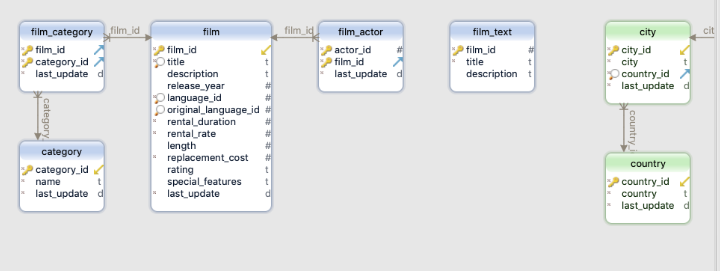

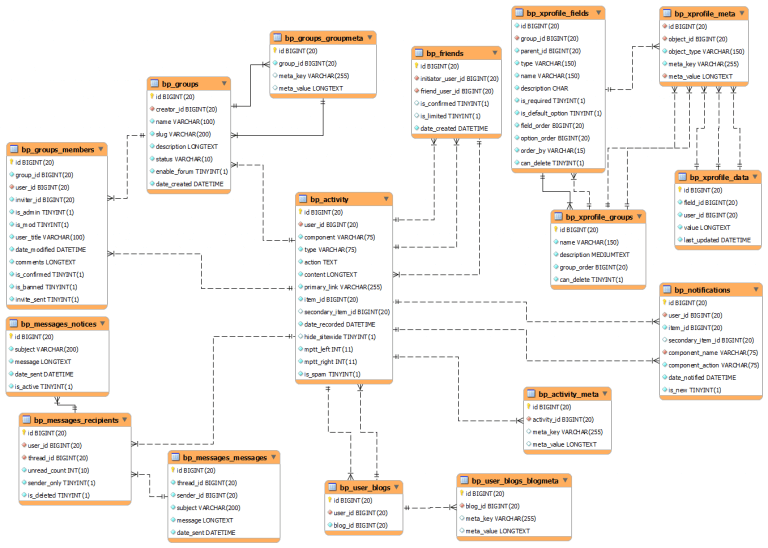

NAME

DBSchema::Normalizer - database normalization. Convert a table from 1st to 2nd normal form.

SYNOPSIS

The easy way is to give all parameters to the constructor, for example `lookup_fields => $lookupfields` (a comma-separated list). Alternatively, you can have some more control by creating the lookup table and the normalized table separately, which is especially useful if one of them is an intermediate step: `use DBSchema::Normalizer qw(create_lookup_table create_normalized_table);`

DBSchema::Normalizer is a module to help transform MySQL database tables from 1st to 2nd normal form. Simply put, it will create a lookup table out of a set of repeating fields from a source table, and replace those fields with a foreign key that points to the corresponding fields in the newly created table. All information is taken from the database itself. There is no need to specify existing details. The module is capable of re-creating existing indexes, and should deal with complex cases where the replaced fields are part of a primary key.

The concept behind DBSchema::Normalizer is based upon some DBMS properties. To replace repeating fields with a foreign key pointing to a lookup table, you must be sure that for each distinct set of values you have a distinct foreign key. You might be tempted to solve the problem with something like this:

- for each record, identify the unique value for the fields to be moved into a lookup table and store it in a hash
- again, for each record, find the corresponding value in the previously saved hash and save the non-lookup fields plus the …
- for each key in the hash, write the values to a lookup table

I can find four objections against such an attempt:

1. The source table can be very large (and some of the tables I had to normalize were indeed huge; this kind of solution would have crashed any program trying to load them into memory). Instead of reading the source table into memory, we could just read the records twice from the database and deal with them one at a time. However, even the size of the hash could prove to be too much for our computer memory. A hash of 2_000_000 items is unlikely to be handled efficiently in memory on most present-day desktops.

2. To implement this algorithm, we need to include details specific to our particular records in our code. It is not a good first step toward reuse.

3. We need to fetch data from the database, possibly transform it into suitable formats for our calculation, and then send it back, re-packed in database format. Not always an issue, but think about the handling of floating point fields and timestamp fields with reduced view. Everything can be solved, but it could be a heavy overhead for your sub.

4. Finally, I would say that this kind of task is not your job. It belongs in the database engine, which can easily, within its boundaries, identify unique values and make a lookup table out of them. And it can also easily make a join between source and lookup table.

Let the database engine do its job, and have Perl drive it towards the solution we need to achieve. The algorithm is based upon the fact that a table created from a SELECT DISTINCT statement is guaranteed to have a direct relationship with each record of the source table, when compared using the same elements we considered in the SELECT DISTINCT.

It starts by creating the lookup table with a statement of the form `CREATE TABLE lookup (...)`. The entire operation is run in the server workspace, thus avoiding problems of (a) fetching records (less network traffic), (b) handling data conversion, (c) memory occupation and (d) efficiency.

Consider a table MP3 with these fields, as shown by `mysql> describe MP3`:

| Field | Type | Null | Key | Default | Extra |
| --- | --- | --- | --- | --- | --- |
| ID | int(11) | | PRI | NULL | auto_increment |

We want to produce two tables, the first one having only …, while the second one should get …. (The second one will also need to be further split into … and …, but we can deal with that later.)
